The AI Control Plane for Enterprises

One endpoint, every LLM. NextBrain gives you governance, observability, intelligent routing, and failover across GPT-4, Claude, AWS Bedrock, and your own fine-tuned models.

Every team picked a different model. Every integration is bespoke. No visibility, no governance, no failover.

Which team is calling which model, for what, at what cost?

Data residency, access controls, audit trails — fragmented across every provider.

Provider outage = 2am manual scramble to reroute traffic.

Simple tasks running on premium models. No intelligent routing.

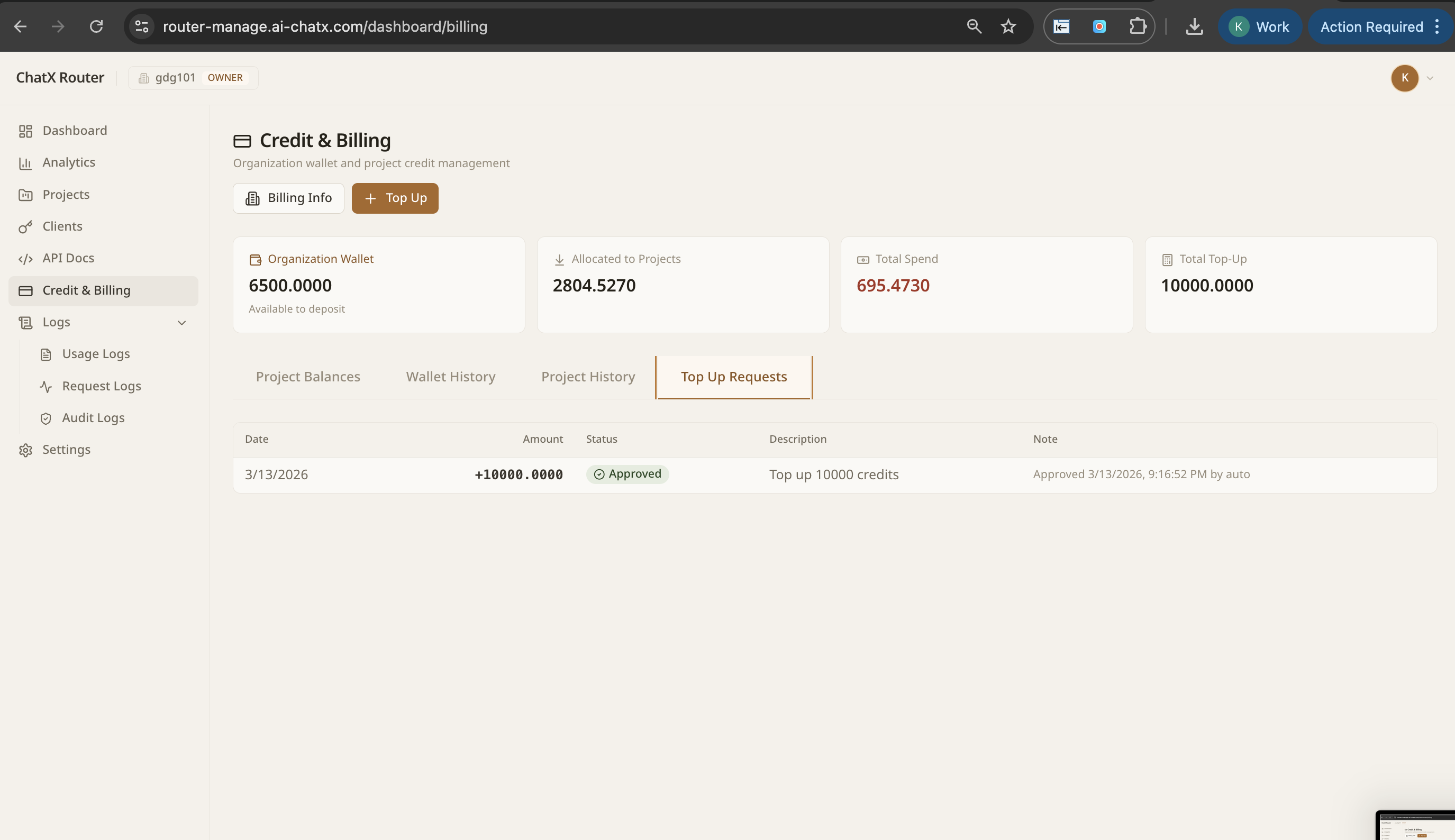

AWS now officially supports Claude Desktop (Cowork) with third-party inference gateways. That means every AI call from every employee's desktop — instead of going directly to Anthropic — can be routed through NextBrain.

One configuration. Full visibility. Your internal policy, enforced automatically.

Every token used by every employee's Claude Desktop is visible, attributed, and budget-capped. No surprises at the end of the month.

Route sensitive work to compliant models. Block unapproved providers. Enforce data residency at the gateway — before the request leaves your control.
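As a rough illustration of what a gateway-side check like this could look like, here is a minimal sketch. The `GatewayPolicy` and `OutboundCall` shapes, the field names, and the region string are assumptions made for this example, not NextBrain's actual policy schema.

```typescript
// Hypothetical policy shape; field names are illustrative only.
interface GatewayPolicy {
  allowedProviders: string[]; // providers that IT has approved
  allowedRegions: string[];   // regions where data may be processed
}

// Hypothetical description of a call about to leave the gateway.
interface OutboundCall {
  provider: string;
  region: string;
}

type Decision = { allowed: true } | { allowed: false; reason: string };

// Evaluate a call against the policy before it leaves the gateway.
function evaluateCall(policy: GatewayPolicy, call: OutboundCall): Decision {
  if (policy.allowedProviders.indexOf(call.provider) === -1) {
    return { allowed: false, reason: `provider ${call.provider} is not approved` };
  }
  if (policy.allowedRegions.indexOf(call.region) === -1) {
    return { allowed: false, reason: `region ${call.region} violates data residency` };
  }
  return { allowed: true };
}

const residencyPolicy: GatewayPolicy = {
  allowedProviders: ["bedrock"],
  allowedRegions: ["ap-southeast-1"],
};

const approved = evaluateCall(residencyPolicy, { provider: "bedrock", region: "ap-southeast-1" });
const blocked = evaluateCall(residencyPolicy, { provider: "openai", region: "us-east-1" });
```

The point of the sketch is ordering: the decision is made before any bytes are sent to a provider, so a blocked request never leaves your control.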

Employees stay in their desktop flow. IT gets a single control plane. Real work happens, real usage is tracked, real budgets are respected — all at once.

Redirect Claude Desktop traffic to Bedrock for data residency. Employees use the same interface. Your compliance team gets what they need.

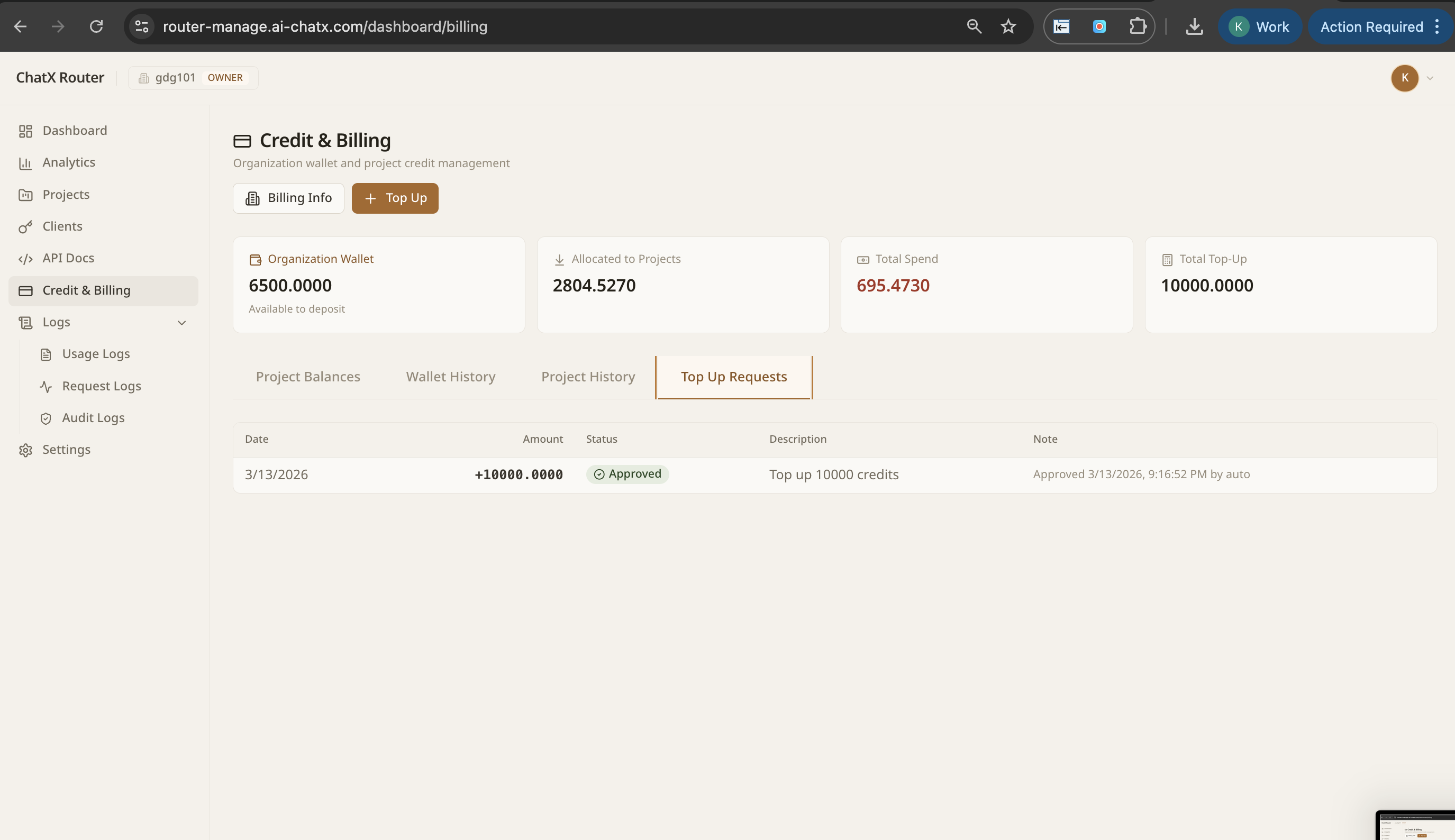

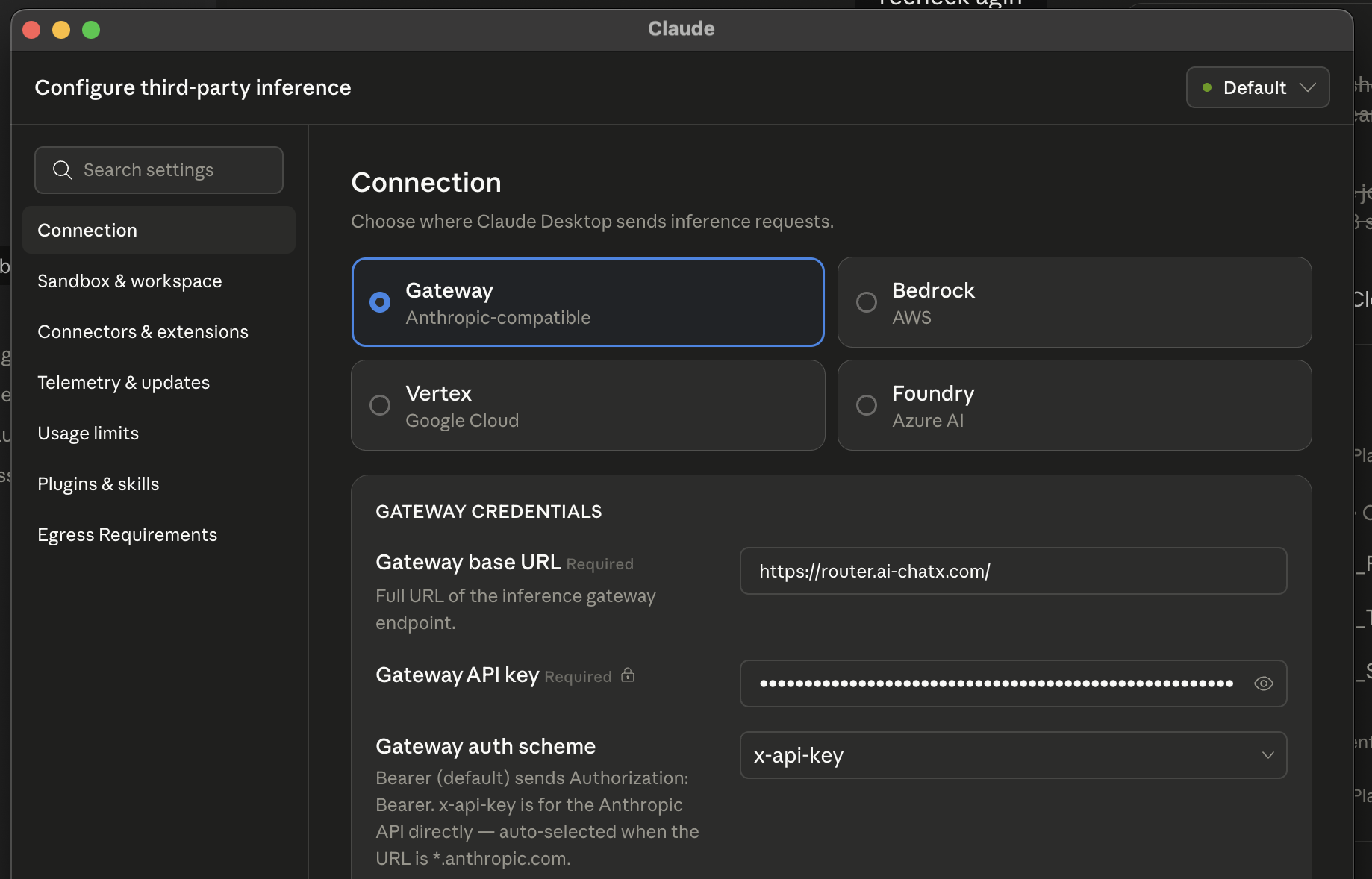

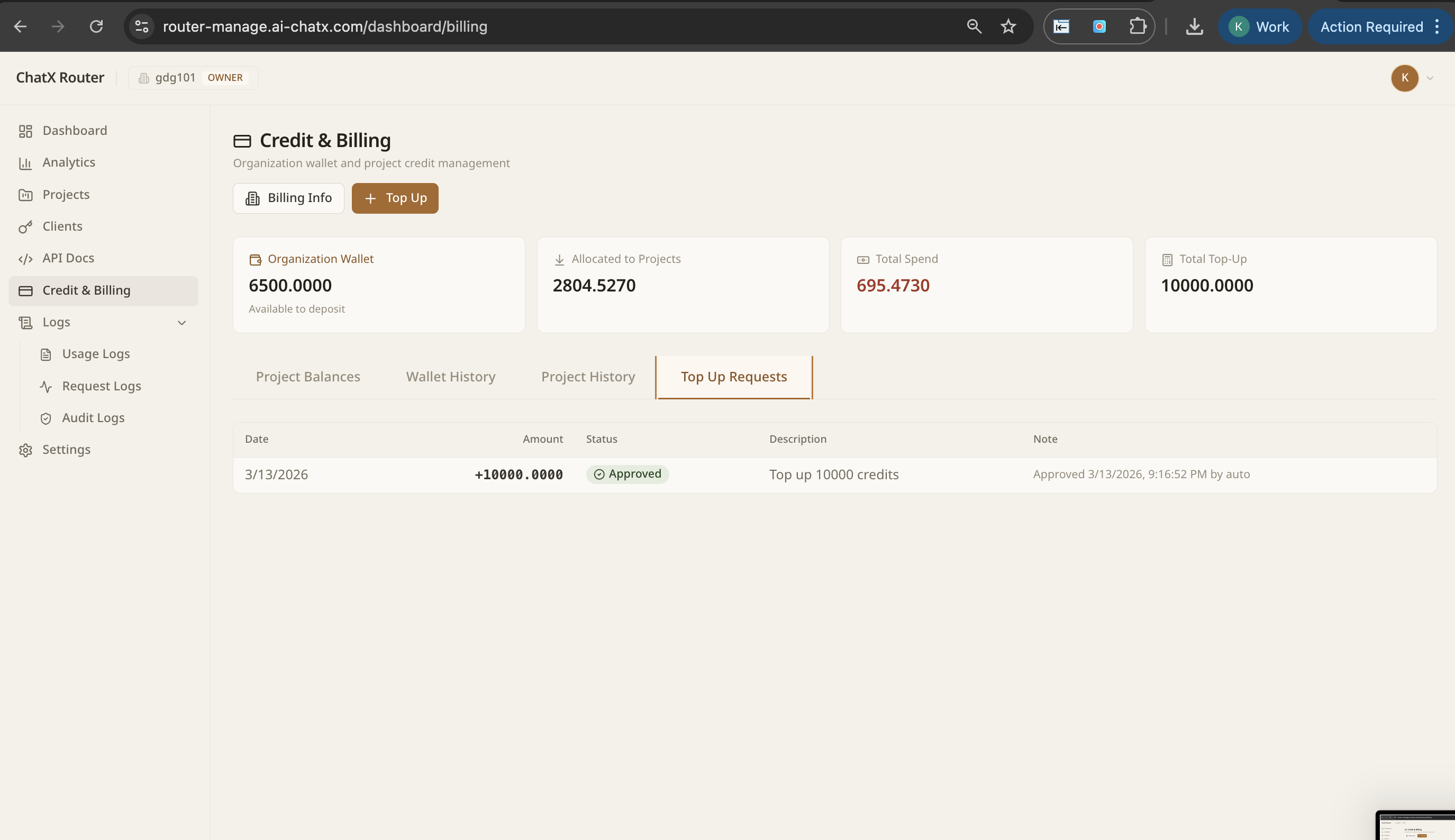

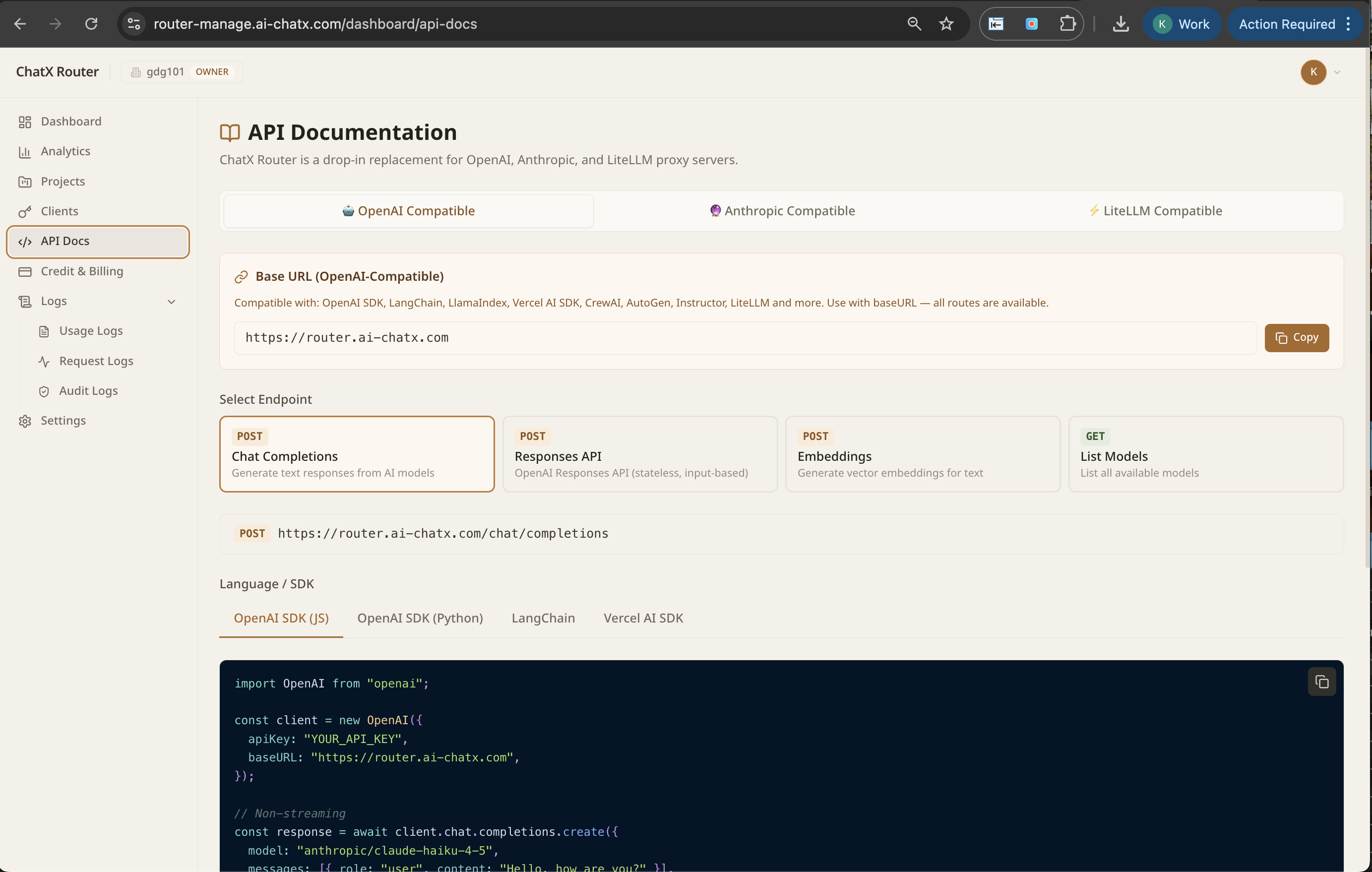

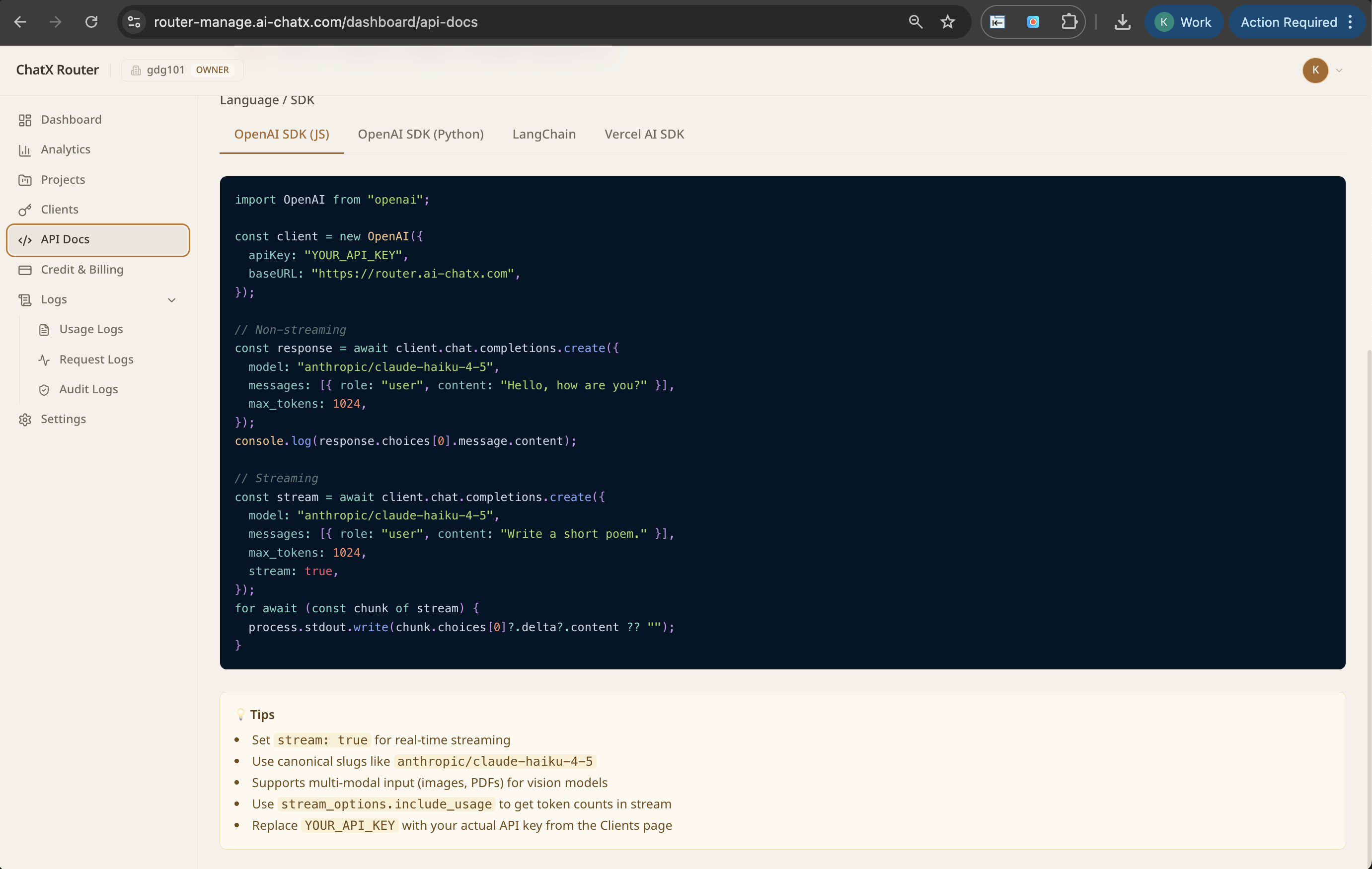

Example shows a self-hosted deployment at a custom domain (ai-chatx.com). Your NextBrain endpoint uses your own URL.

30-minute integration. Drop-in compatible with the OpenAI SDK. No rip-and-replace.

Point your application at NextBrain. It is drop-in compatible with the OpenAI SDK.

Every request routes to the optimal model based on cost, quality, and compliance.
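One simple way to think about this kind of routing is "cheapest compliant model that clears a quality bar". The sketch below shows that rule only; the model IDs, prices, and quality scores are invented for illustration, and the real routing logic may weigh these factors differently.

```typescript
// Hypothetical catalogue entry; all values below are made up for the example.
interface ModelOption {
  id: string;
  costPer1kTokens: number; // USD, illustrative
  quality: number;         // 0..1, illustrative score
  compliant: boolean;      // passes the caller's compliance policy
}

// Pick the cheapest compliant model that meets the quality floor.
function chooseModel(options: ModelOption[], minQuality: number): ModelOption | undefined {
  const eligible = options.filter(m => m.compliant && m.quality >= minQuality);
  eligible.sort((a, b) => a.costPer1kTokens - b.costPer1kTokens);
  return eligible[0];
}

const catalogue: ModelOption[] = [
  { id: "premium-model", costPer1kTokens: 0.03, quality: 0.95, compliant: true },
  { id: "budget-model", costPer1kTokens: 0.002, quality: 0.8, compliant: true },
  { id: "unvetted-model", costPer1kTokens: 0.001, quality: 0.85, compliant: false },
];

const simpleTask = chooseModel(catalogue, 0.7); // selects "budget-model"
const hardTask = chooseModel(catalogue, 0.9);   // selects "premium-model"
```

Compliance acts as a hard filter here, while cost is only a tiebreaker among models that are good enough, which is why the cheapest entry in the catalogue is never chosen.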

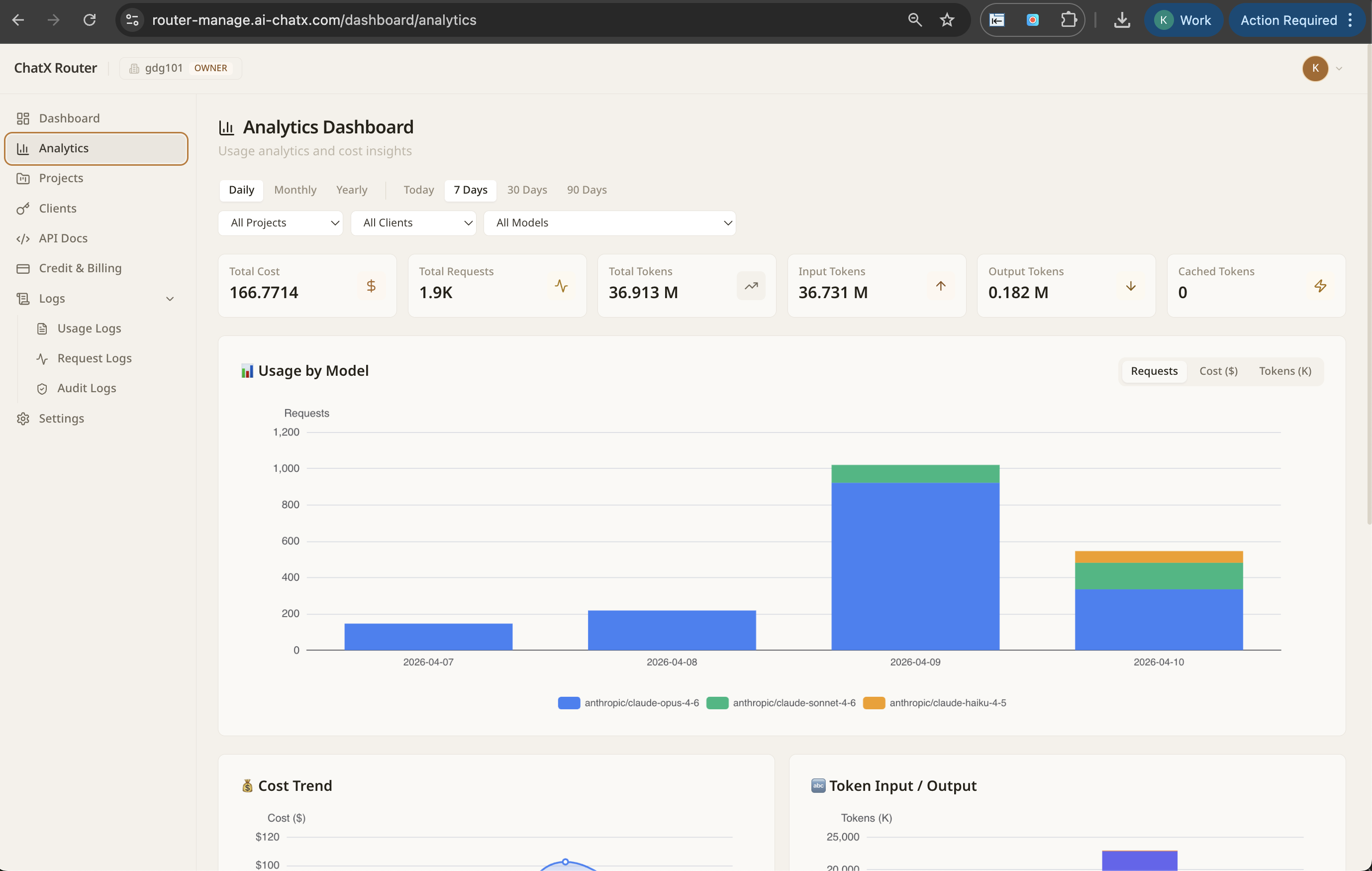

Real-time dashboard of every AI call: who, what, which model, cost.

Every step is self-service. No professional services. No lengthy onboarding. Just a clean interface your team will actually use.

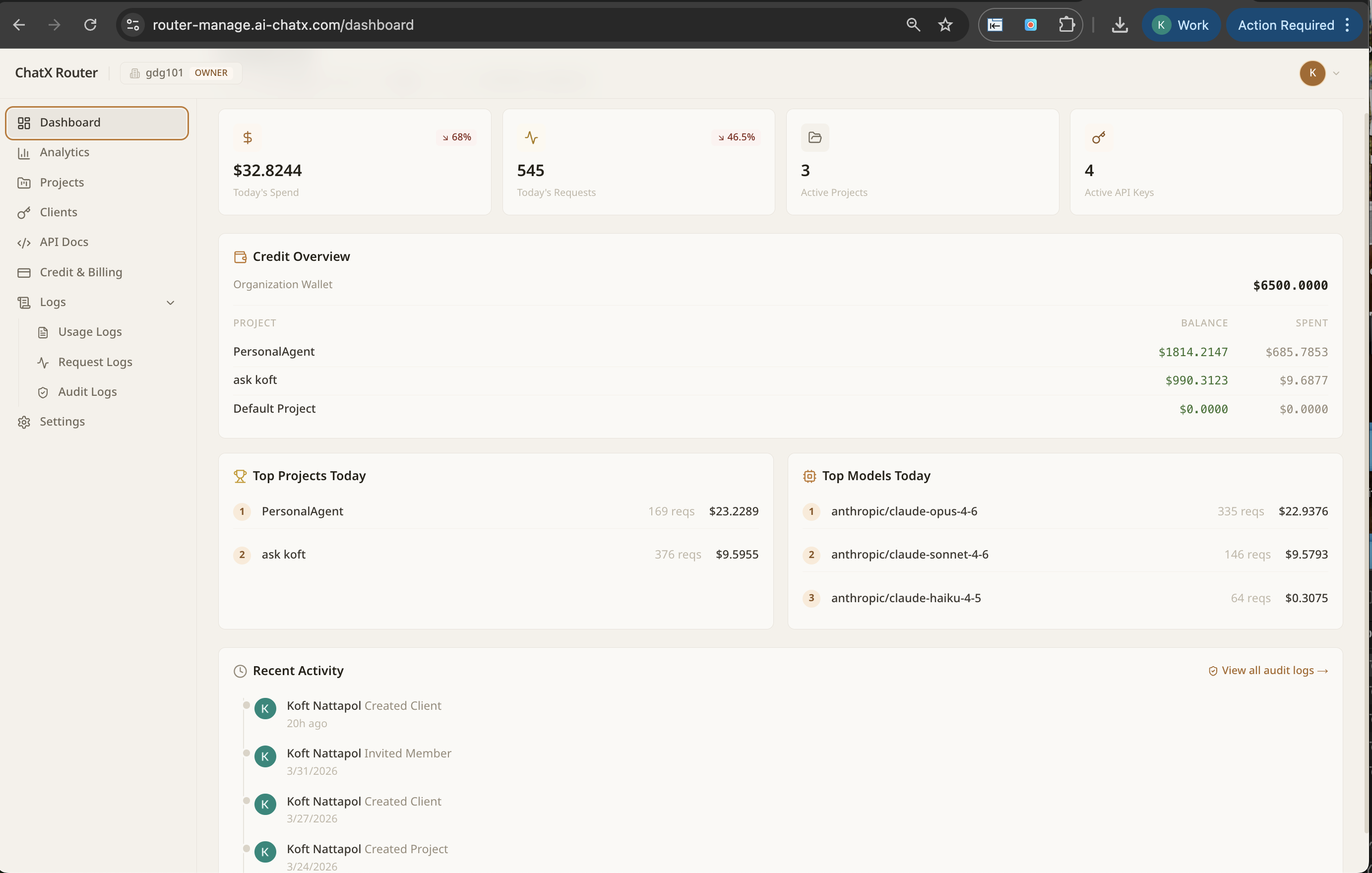

The moment you log in, you see everything: total spend today, live request count, active projects, and which models your organisation is calling most. No configuration. No waiting.

NextBrain is a drop-in replacement for OpenAI and Anthropic. Change your base_url and API key. Your existing code keeps working. Every model available through one endpoint.

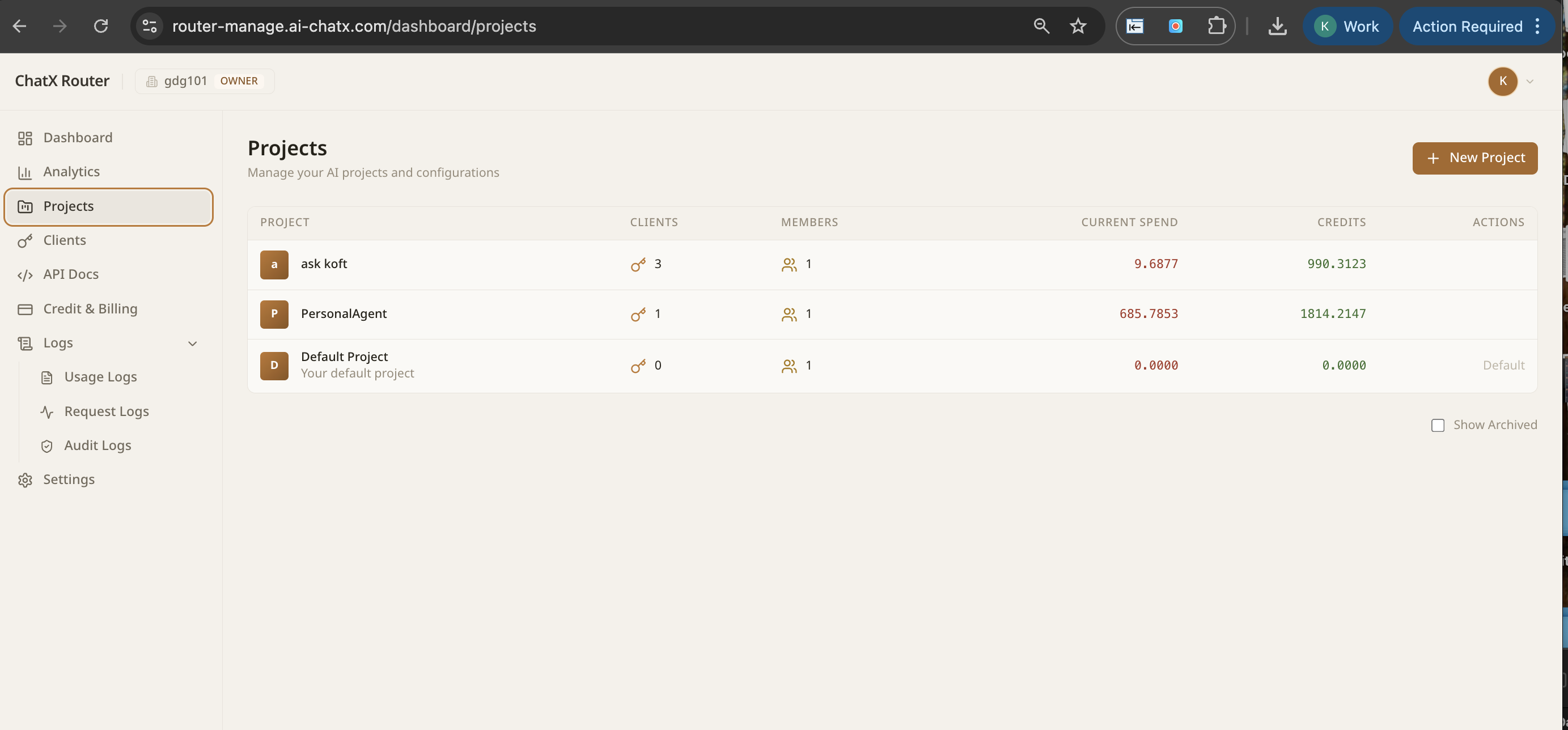

Create a project for each team or product. Every project gets its own spend tracking, client keys, and credit allocation. Individual teams see only their own.

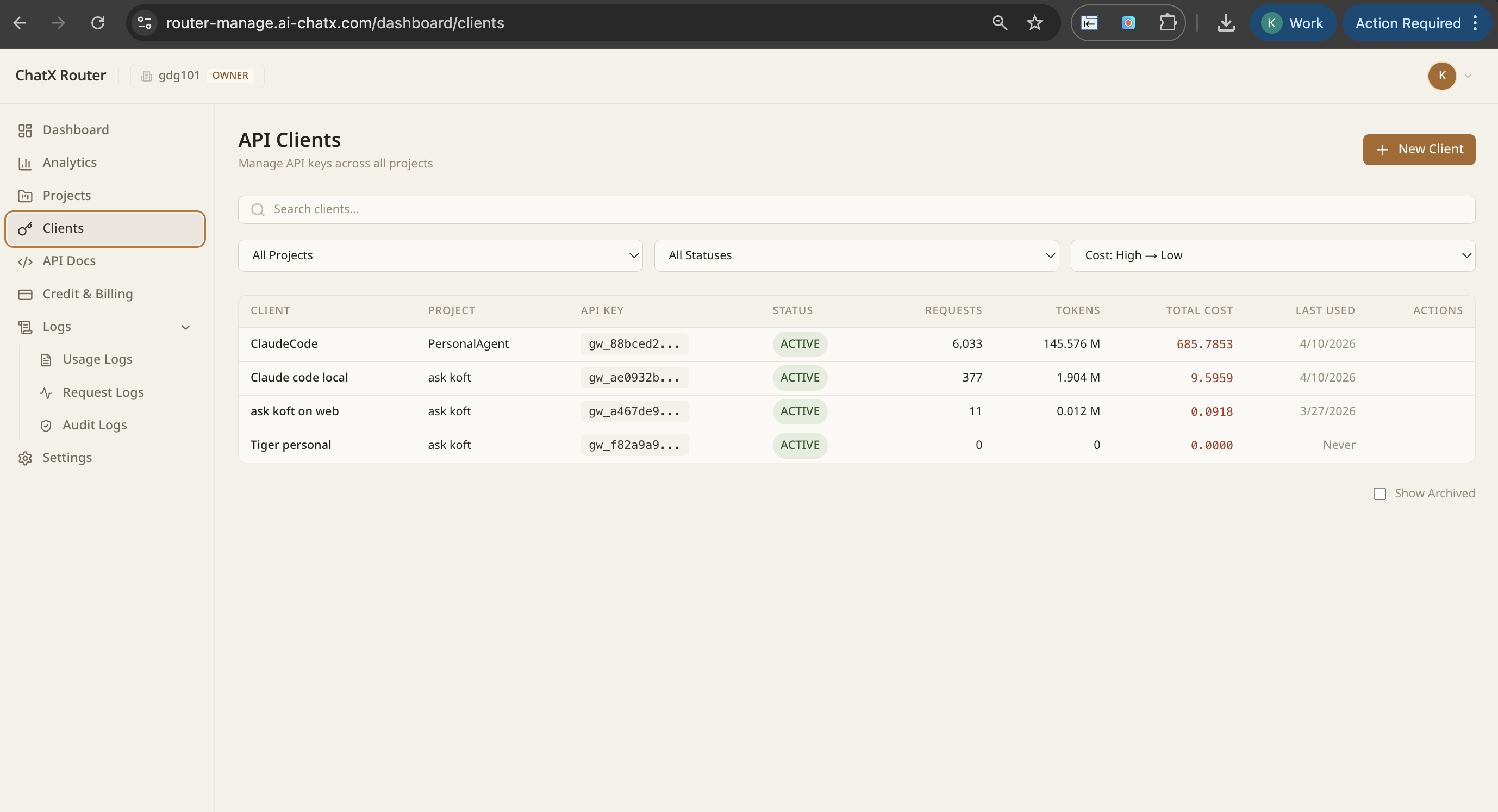

Issue API keys per client or application. See exactly how many tokens each client is consuming, what it costs, and when they last made a request. Revoke access in one click.

Usage by model over time, cost trends, token input/output ratios, and a full model-by-model breakdown. Filter by project, client, or model. Spot anomalies before they become surprises.
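To make the model-by-model breakdown concrete, the following sketch aggregates raw usage records into per-model totals. The `UsageRecord` shape and the sample numbers are assumptions for illustration and do not reflect NextBrain's export format.

```typescript
// Hypothetical usage record; field names are illustrative.
interface UsageRecord {
  model: string;
  inputTokens: number;
  outputTokens: number;
  costUsd: number;
}

interface ModelSummary {
  inputTokens: number;
  outputTokens: number;
  costUsd: number;
}

// Sum tokens and cost per model.
function summarizeByModel(records: UsageRecord[]): Record<string, ModelSummary> {
  const summary: Record<string, ModelSummary> = {};
  for (const r of records) {
    if (!summary[r.model]) {
      summary[r.model] = { inputTokens: 0, outputTokens: 0, costUsd: 0 };
    }
    const s = summary[r.model];
    s.inputTokens += r.inputTokens;
    s.outputTokens += r.outputTokens;
    s.costUsd += r.costUsd;
  }
  return summary;
}

const usage: UsageRecord[] = [
  { model: "model-a", inputTokens: 1000, outputTokens: 200, costUsd: 0.5 },
  { model: "model-a", inputTokens: 3000, outputTokens: 800, costUsd: 0.25 },
  { model: "model-b", inputTokens: 500, outputTokens: 500, costUsd: 1 },
];

const byModel = summarizeByModel(usage);
// Input/output ratio for model-a: 4000 input tokens to 1000 output tokens.
const ratioA = byModel["model-a"].inputTokens / byModel["model-a"].outputTokens;
```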

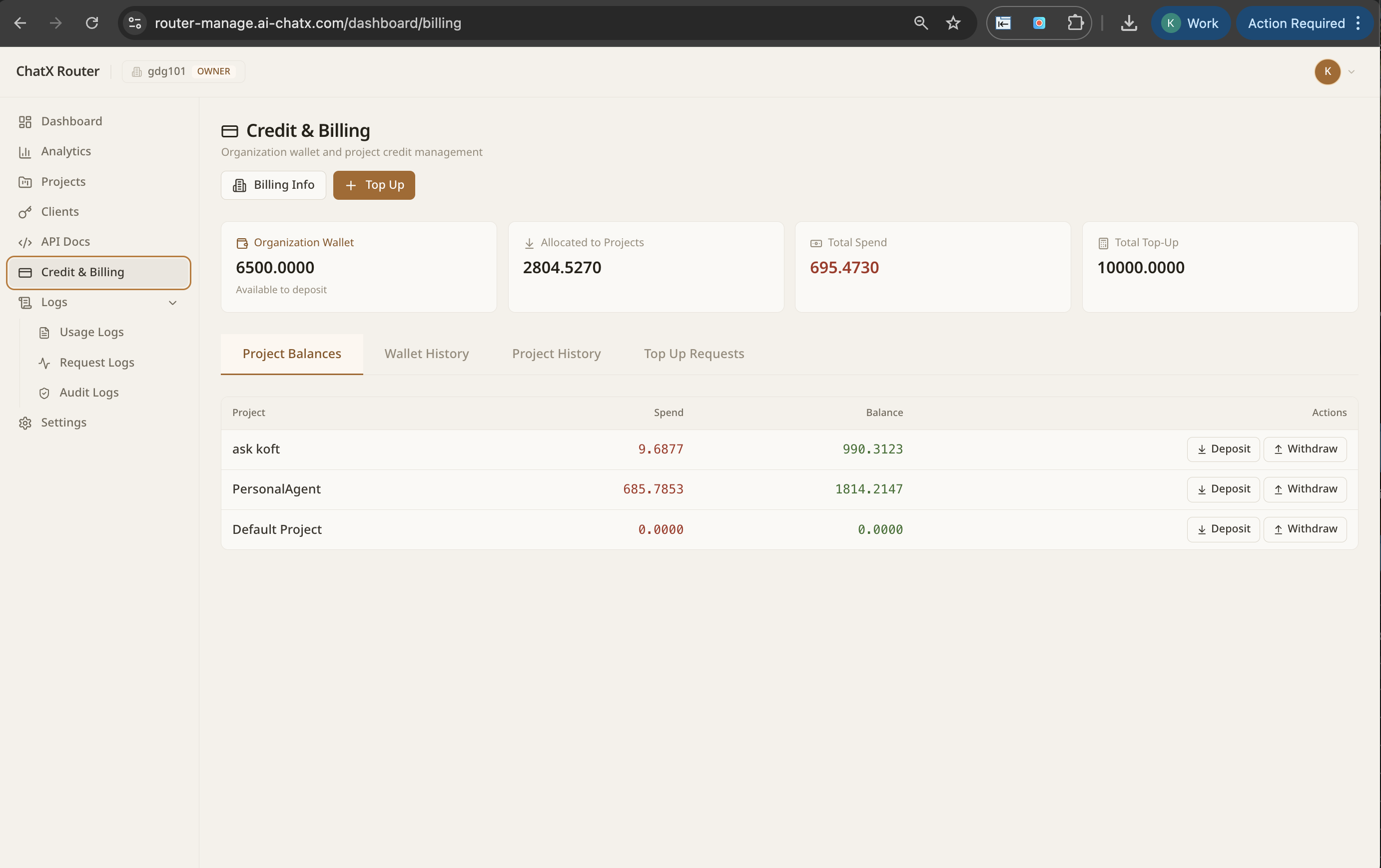

Manage a central organisation wallet. Allocate credits to projects. Top up in one click with token estimates shown upfront. Full transaction history available.
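The wallet model described here can be pictured as one shared pool that is carved into per-project allocations. The sketch below is an in-memory illustration under that assumption; the `OrgWallet` class, its method names, and the project IDs are hypothetical and are not NextBrain's API.

```typescript
// Hypothetical in-memory model of an organisation wallet.
class OrgWallet {
  private unallocated: number;
  private allocations: Record<string, number> = {};

  constructor(initialCredits: number) {
    this.unallocated = initialCredits;
  }

  // Add credits to the shared pool.
  topUp(credits: number): void {
    if (credits <= 0) throw new Error("credits must be positive");
    this.unallocated += credits;
  }

  // Move credits from the shared pool to one project.
  allocate(projectId: string, credits: number): void {
    if (credits <= 0) throw new Error("credits must be positive");
    if (credits > this.unallocated) throw new Error("insufficient unallocated credits");
    this.unallocated -= credits;
    this.allocations[projectId] = this.balanceOf(projectId) + credits;
  }

  balanceOf(projectId: string): number {
    return this.allocations[projectId] ?? 0;
  }

  remaining(): number {
    return this.unallocated;
  }
}

const wallet = new OrgWallet(1000);
wallet.allocate("search-team", 400);
wallet.allocate("support-bot", 250);
```

Rejecting an allocation that exceeds the pool, rather than letting a project go negative, is what keeps the sum of project balances bounded by what the organisation has actually purchased.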

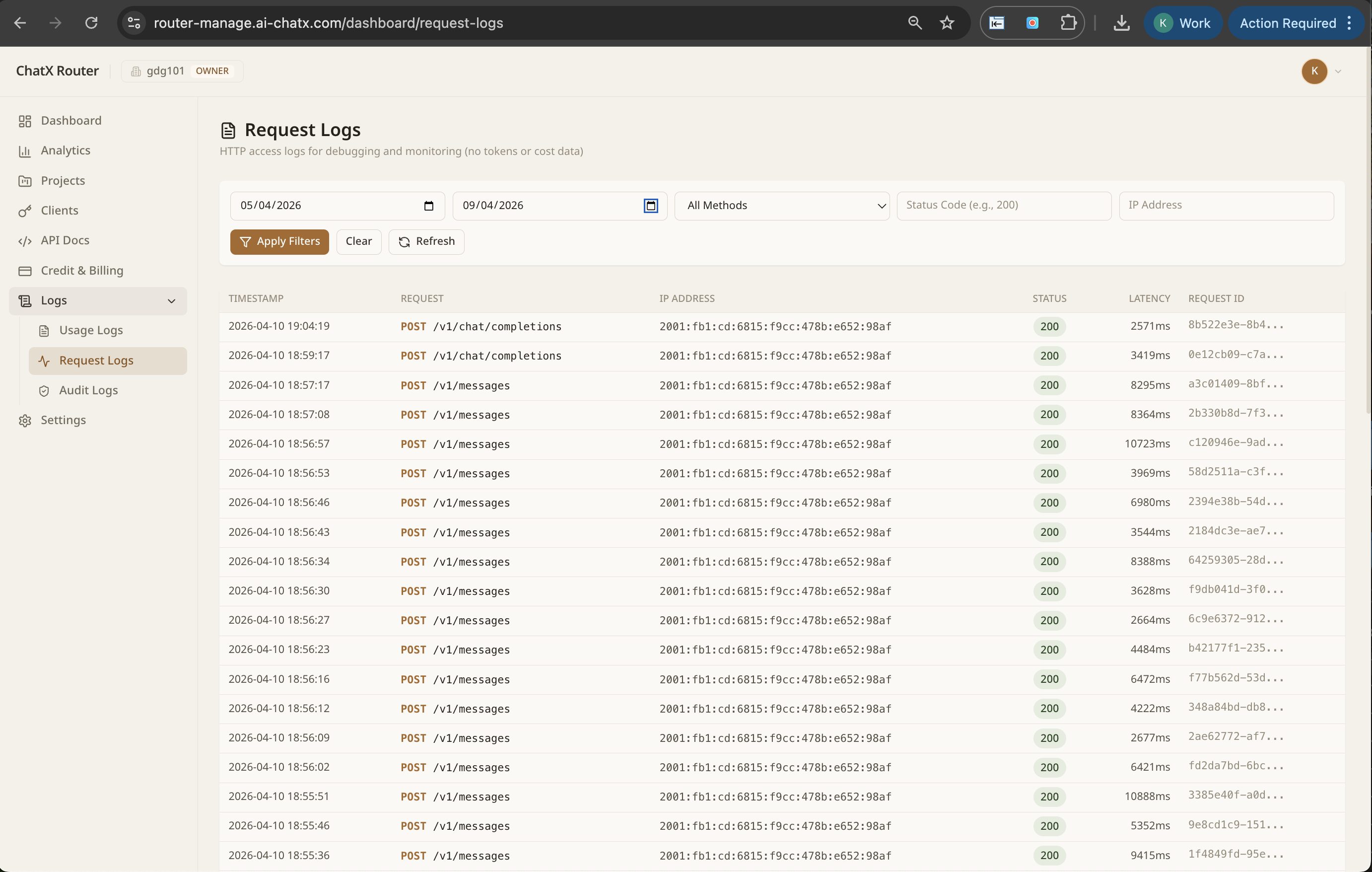

Every API request captured: timestamp, endpoint, method, status code, latency, and request ID. Filter by date range, method, or status. When something breaks, you have the full picture immediately.
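The filters mentioned above map naturally onto a predicate over log entries. This is a minimal sketch of that idea; the `RequestLogEntry` field names mirror the list in the text, while the types, the sample entries, and the request IDs are made up for illustration.

```typescript
// Fields follow the list above; exact names and types are assumptions.
interface RequestLogEntry {
  timestamp: string; // ISO 8601
  endpoint: string;
  method: string;
  statusCode: number;
  latencyMs: number;
  requestId: string;
}

interface LogFilter {
  from?: string; // ISO 8601, inclusive
  to?: string;   // ISO 8601, inclusive
  method?: string;
  statusCode?: number;
}

// Keep only entries that match every filter field that was provided.
function filterLogs(entries: RequestLogEntry[], f: LogFilter): RequestLogEntry[] {
  const fromMs = f.from === undefined ? -Infinity : Date.parse(f.from);
  const toMs = f.to === undefined ? Infinity : Date.parse(f.to);
  return entries.filter(e => {
    const t = Date.parse(e.timestamp);
    return t >= fromMs && t <= toMs
      && (f.method === undefined || e.method === f.method)
      && (f.statusCode === undefined || e.statusCode === f.statusCode);
  });
}

const sampleLogs: RequestLogEntry[] = [
  { timestamp: "2025-01-10T09:00:00Z", endpoint: "/v1/chat/completions", method: "POST", statusCode: 200, latencyMs: 420, requestId: "req_001" },
  { timestamp: "2025-01-10T09:05:00Z", endpoint: "/v1/chat/completions", method: "POST", statusCode: 502, latencyMs: 30, requestId: "req_002" },
  { timestamp: "2025-01-11T14:00:00Z", endpoint: "/v1/models", method: "GET", statusCode: 200, latencyMs: 12, requestId: "req_003" },
];

// Failed requests on 10 January only.
const failures = filterLogs(sampleLogs, {
  from: "2025-01-10T00:00:00Z",
  to: "2025-01-10T23:59:59Z",
  statusCode: 502,
});
```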

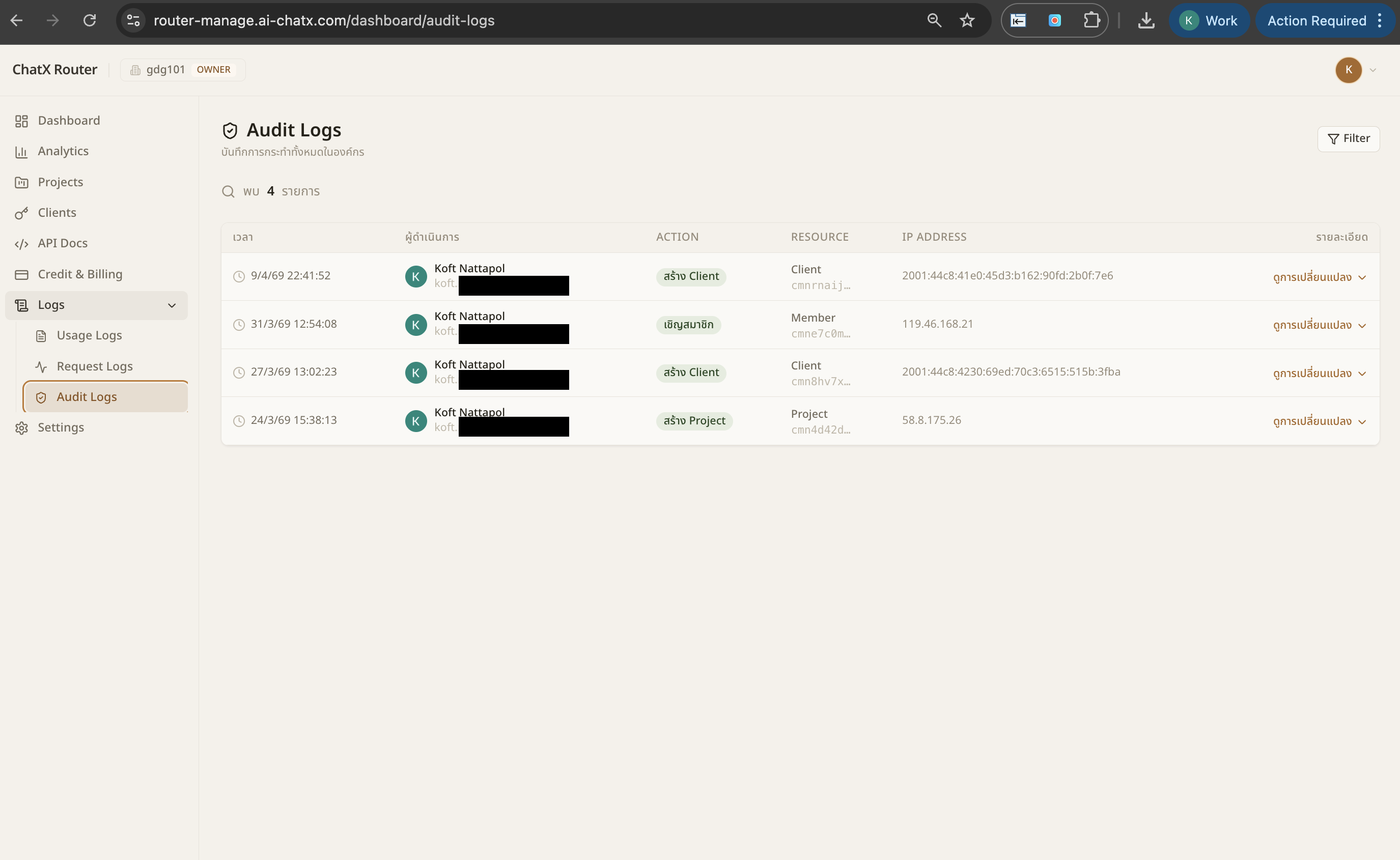

Every administrative action recorded: who created a client, who invited a member, who changed settings, with IP address and timestamp. Assign roles: Owner, Admin, Billing, or Member.
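A role model like this is often implemented as a lookup from role to permitted actions, with each administrative action written to an audit record. In the sketch below, the four role names come from the text; the action names, the mapping of actions to roles, and the `AuditEntry` shape are assumptions made purely for illustration.

```typescript
type Role = "Owner" | "Admin" | "Billing" | "Member";

// Hypothetical action names; the actual permission set is not documented here.
type AdminAction = "manage_members" | "create_client" | "top_up_wallet" | "view_usage";

// Hypothetical mapping of roles to actions.
const rolePermissions: Record<Role, AdminAction[]> = {
  Owner: ["manage_members", "create_client", "top_up_wallet", "view_usage"],
  Admin: ["manage_members", "create_client", "view_usage"],
  Billing: ["top_up_wallet", "view_usage"],
  Member: ["view_usage"],
};

function can(role: Role, action: AdminAction): boolean {
  return rolePermissions[role].indexOf(action) !== -1;
}

// Hypothetical audit record: who did what, from where, and when.
interface AuditEntry {
  actor: string;
  action: AdminAction;
  ipAddress: string;
  timestamp: string; // ISO 8601
}

const auditTrail: AuditEntry[] = [];

// Perform the permission check and record the action only if it is allowed.
function recordAction(actor: string, role: Role, action: AdminAction, ipAddress: string): boolean {
  if (!can(role, action)) return false;
  auditTrail.push({ actor, action, ipAddress, timestamp: new Date().toISOString() });
  return true;
}

const billingTopUp = recordAction("dana@example.com", "Billing", "top_up_wallet", "203.0.113.7");
const memberInvite = recordAction("lee@example.com", "Member", "manage_members", "203.0.113.8");
```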

Change your base URL (`baseURL` in the JavaScript SDK, `base_url` in Python) and API key, and you're routing through NextBrain.

    import OpenAI from "openai";

    // Before: direct to OpenAI
    const client = new OpenAI({
      apiKey: "sk-..."
    });

    // After: the same client, pointed at NextBrain
    const client = new OpenAI({
      apiKey: "nb_your-key",
      baseURL: "https://router.nextbrain.me/v1"
    });

    // Route to any model
    await client.chat.completions.create({
      model: "anthropic/claude-sonnet-4-5",
      messages: [...]
    });

OpenAI SDK (JS + Python), Anthropic SDK, LangChain, Vercel AI SDK, LiteLLM.

Every call is authenticated, metered, routed, and logged.

Live code samples for every framework.

Per-project limits, real-time tracking, full audit trail.

Your platform team, security team, and finance team get the visibility they need to scale AI safely.

Every AI call: who, what, which model, latency, cost. One dashboard.

Data residency, access controls, PII redaction, audit logs.

Provider outage? We reroute instantly. Users never see it.
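Automatic rerouting of this kind is commonly built on a circuit-breaker pattern: count consecutive failures per provider and skip any provider that has tripped. The sketch below shows a simplified version of that pattern, not NextBrain's implementation. The `FailoverRouter` class, the provider names, and the threshold are hypothetical, and a production breaker would also need timed recovery probes.

```typescript
// Simplified circuit breaker over an ordered list of providers.
class FailoverRouter {
  private providers: string[];
  private threshold: number;
  private consecutiveFailures: Record<string, number> = {};

  // providers are listed in order of preference.
  constructor(providers: string[], threshold: number) {
    this.providers = providers;
    this.threshold = threshold;
  }

  recordFailure(provider: string): void {
    this.consecutiveFailures[provider] = (this.consecutiveFailures[provider] ?? 0) + 1;
  }

  // A success resets the failure count and closes the breaker.
  recordSuccess(provider: string): void {
    this.consecutiveFailures[provider] = 0;
  }

  // Return the most preferred provider whose breaker has not tripped.
  pick(): string | undefined {
    for (const p of this.providers) {
      if ((this.consecutiveFailures[p] ?? 0) < this.threshold) return p;
    }
    return undefined;
  }
}

const router = new FailoverRouter(["primary-provider", "backup-provider"], 2);
const beforeOutage = router.pick();
router.recordFailure("primary-provider");
router.recordFailure("primary-provider");
const duringOutage = router.pick();
router.recordSuccess("primary-provider");
const afterRecovery = router.pick();
```

Requiring more than one consecutive failure before switching avoids flapping between providers on a single transient error.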

Intelligent routing saves money. Cost reduction is a byproduct.

First-class Bedrock support alongside every other provider.

Per-team keys, role-based permissions, spend limits.

A walkthrough of the NextBrain control plane — routing, observability, budget controls, live switching across models.

"I've run Go Digit — an enterprise software house in Thailand — for 15 years. In the last 18 months, every single client asked me the same question: how do we manage five different LLMs? NextBrain is my answer."

We're selecting 5 enterprises in Southeast Asia to run NextBrain in production.

In return: usage data, feedback, optional case study.

Contact us for Early Access →